Versos AI has developed a comprehensive set of tools for transforming content owners’ video libraries into structured datasets, ready for licensing and delivery for AI model training at scale.

Video intelligence software developer Versos AI Inc has released the Video Library Intelligence Platform, a set of tools that helps content owners analyse, structure, package and license their video libraries as datasets for AI model training.

AI model builders depend on large-scale cloud service providers – hyperscalers like AWS, Google Cloud, Azure, IBM Cloud and Oracle Cloud – for training compute. These companies operate massive computing, storage and networking resources through distributed, connected servers and software. However, the prerequisites that hyperscalers set for data used to train and fine-tune AI models have become much more precise.

Scene-Level Video Intelligence

Alongside the launch of its Video Library Intelligence Platform, Versos AI has formed a multi-year commercial partnership and data-delivery contract with CuriosityStream Inc, a streaming platform that specialises in licensing video data for AI.

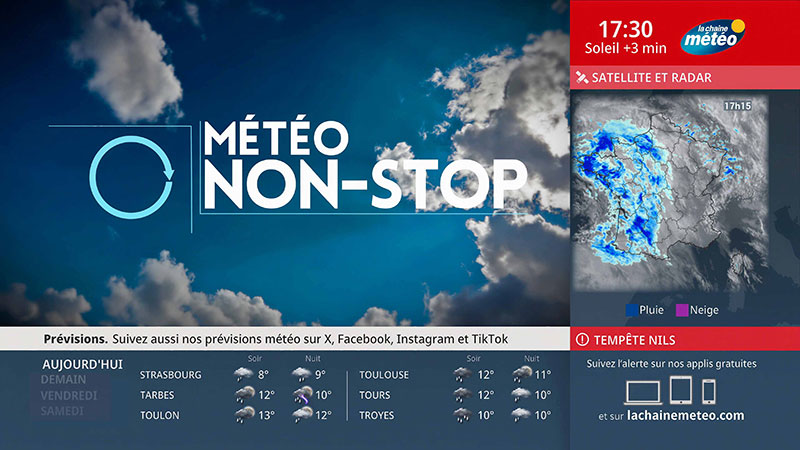

CuriosityStream uses Versos AI’s technology to generate scene-level video intelligence that can meet very precise AI dataset requirements. “We’re seeing extraordinary demand from major hyperscalers, AI innovators and tech leaders who recognize that high-quality, structured video and metadata are essential to training more capable and context-rich models,” said Clint Stinchcomb, President & CEO of CuriosityStream.

“Our extensive library of over 2.5 million hours of video and audio, combined with Versos AI’s best-in-class delivery and indexing capabilities, helps position CuriosityStream as the leading provider for next-generation AI models. We’re excited to have a partner like Versos AI that enables us to accelerate growth as AI video data training reaches the next inflection point of the AI market boom.” CuriosityStream has also made an investment in Versos AI, reflecting its confidence in the technology.

High-Quality and Highly Structured

As AI models rapidly shift from language-based training to world models, demand is increasing for large volumes of video data that is both high-quality and highly structured. A world model is an AI system’s internal simulation of how its environment works, which the system can use to reason, predict and plan. Rather than simply mapping inputs to outputs reactively, an AI with a world model can weigh the likely consequences of different actions before acting, reasoning about cause and effect within the rules of physics or social dynamics.

“AI training has outgrown scraping data,” said Chris Keevill, CEO and Co-founder of Versos AI. “Video introduces significant complexity regarding structure and delivery at scale. Versos AI was built to manage that complexity end-to-end – so that content owners can identify new revenue streams and hyperscalers can train models with licensed datasets.

“Until now, there has been no purpose-built solution for converting unstructured video libraries into structured datasets suitable for hyperscale AI training. Versos AI closes the gap by making video data searchable, licensable and ready for model development. Unlike a broker or middleman, we are building common infrastructure, or data structure, that can be shared between libraries, forming a network or marketplace (see below) of libraries on a common platform.”

Breaking the Data Preparation Barrier

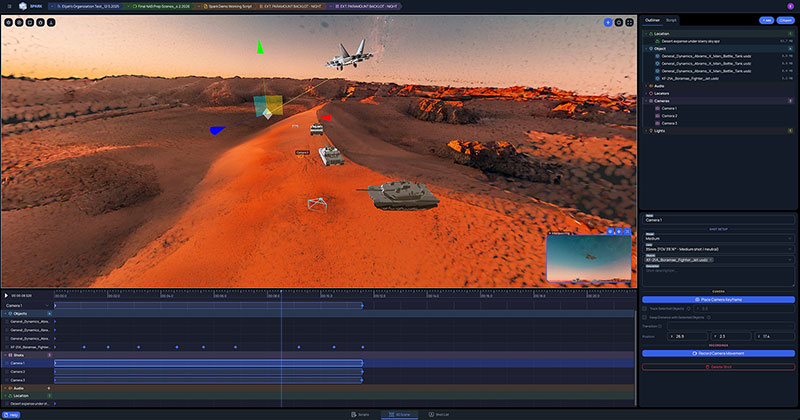

Currently, when AI teams set out to train a model, well over half their time is spent preparing data – sourcing, organising and structuring massive, unstructured video libraries – which is costly in both time and money. The Versos platform includes search, filtering and segmentation features so that users can build highly specific datasets, down to scene level, by criteria such as action, category, theme, language, format or resolution.
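To make the idea of attribute-based dataset building concrete, the sketch below filters a list of scene-level records by optional criteria plus a minimum resolution. It is a minimal illustration under stated assumptions, not Versos AI’s API: the `Scene` record, its field names and the `select_scenes` helper are all hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable, Optional

@dataclass(frozen=True)
class Scene:
    # Hypothetical scene-level record; field names are illustrative only.
    video_id: str
    start_s: float    # scene start within the source video, in seconds
    end_s: float      # scene end, in seconds
    action: str
    category: str
    language: str
    container: str    # file format, e.g. "mp4"
    height: int       # vertical resolution in pixels

def select_scenes(
    scenes: Iterable[Scene],
    action: Optional[str] = None,
    category: Optional[str] = None,
    language: Optional[str] = None,
    container: Optional[str] = None,
    min_height: int = 0,
) -> list[Scene]:
    """Return scenes matching every supplied criterion (None means 'any')."""
    wanted = {"action": action, "category": category,
              "language": language, "container": container}
    result = []
    for s in scenes:
        if s.height < min_height:
            continue  # below the requested resolution
        if all(v is None or getattr(s, k) == v for k, v in wanted.items()):
            result.append(s)
    return result

library = [
    Scene("v1", 0.0, 12.5, "swimming", "nature", "en", "mp4", 2160),
    Scene("v1", 12.5, 30.0, "flying", "nature", "en", "mp4", 2160),
    Scene("v2", 5.0, 9.0, "swimming", "sports", "fr", "mov", 1080),
]
subset = select_scenes(library, action="swimming", min_height=2160)
print([(s.video_id, s.start_s) for s in subset])  # [('v1', 0.0)]
```

Because unspecified criteria default to `None`, the same function serves both broad and very narrow requests; a production system would run such filters against an index rather than a Python list.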

Using a video library as a dataset means structuring the video files in a particular way. Such a dataset encompasses the raw media (the actual video files, of varying resolution, frame rate, duration, codec and format) plus metadata describing each video. The metadata holds information like the title, duration, file size, creation date, source, language and technical information.

Equally important are annotations or labels, which are what make a video library useful as a dataset. Depending on the application, videos might be tagged with annotations such as action or event labels, scene classifications, transcripts or captions, sentiment, speaker ID or timestamps identifying critical moments. Finally, an indexing structure is needed to query and retrieve clips efficiently, for instance by time range, label, scene or other attributes.
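The three layers described above (raw media properties, descriptive metadata and time-coded annotations, plus an index over them) can be sketched as a simple data structure. This is an illustrative assumption only: `VideoRecord`, `Annotation` and `LabelIndex` are hypothetical names, and the index is a toy inverted index from label to clips, queried by overlap with a time window.

```python
from collections import defaultdict
from dataclasses import dataclass, field

@dataclass
class Annotation:
    label: str       # e.g. an action, event or scene class
    start_s: float   # start timestamp within the video, in seconds
    end_s: float     # end timestamp, in seconds

@dataclass
class VideoRecord:
    # Technical properties of the raw media file
    video_id: str
    codec: str
    fps: float
    width: int
    height: int
    duration_s: float
    # Descriptive metadata
    title: str
    language: str
    source: str
    # Time-coded labels that make the file useful as training data
    annotations: list[Annotation] = field(default_factory=list)

class LabelIndex:
    """Toy inverted index: label -> list of (video_id, start_s, end_s)."""

    def __init__(self, records):
        self._by_label = defaultdict(list)
        for rec in records:
            for ann in rec.annotations:
                self._by_label[ann.label].append((rec.video_id, ann.start_s, ann.end_s))

    def query(self, label, t0=0.0, t1=float("inf")):
        """Clips carrying `label` that overlap the time window [t0, t1)."""
        return [(vid, s, e) for vid, s, e in self._by_label.get(label, [])
                if s < t1 and e > t0]

rec = VideoRecord("v1", "h264", 25.0, 1920, 1080, 600.0, "Reef Life", "en", "archive",
                  [Annotation("fish_feeding", 30.0, 45.0), Annotation("diver", 40.0, 90.0)])
idx = LabelIndex([rec])
print(idx.query("fish_feeding", 0.0, 60.0))  # [('v1', 30.0, 45.0)]
print(idx.query("diver", 100.0, 200.0))      # []
```

A real index would also key on scene, category and technical attributes, and would typically use an interval tree or database rather than a linear scan per label.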

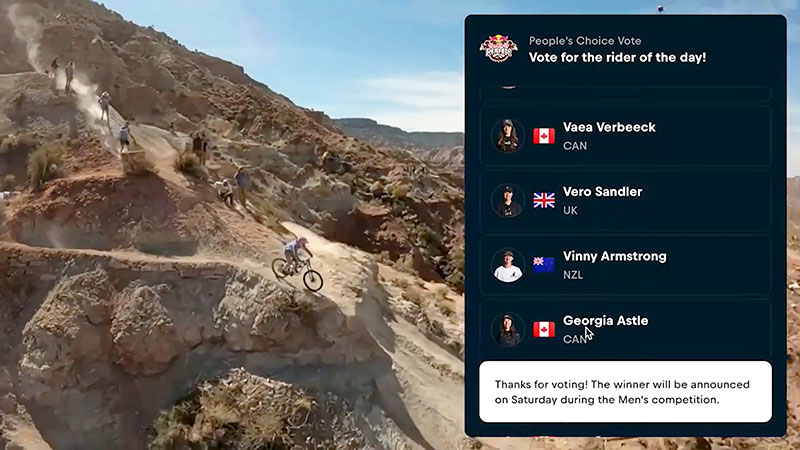

This kind of structuring makes a dataset useful for tasks like action recognition, object detection, video captioning or content recommendation. In addition, because trainers can distinguish datasets verified to cover specific types of content, such as sports, nature or human behaviour, they can deliberately train models on balanced, diverse material, thereby improving performance and reducing bias.
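One common way to turn category labels into a balanced training mix is stratified sampling with a per-category cap. The sketch below shows that technique; it is an assumption chosen for illustration, not a description of how Versos AI or its customers balance datasets, and `balance_by_category` is a hypothetical helper.

```python
import random
from collections import Counter, defaultdict

def balance_by_category(items, key, per_category, seed=0):
    """Draw at most `per_category` items from each category (stratified sample).

    `key` maps an item to its category label. Categories with fewer items
    than the cap contribute everything they have.
    """
    groups = defaultdict(list)
    for item in items:
        groups[key(item)].append(item)
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    sample = []
    for category in sorted(groups):
        members = groups[category]
        k = min(per_category, len(members))
        sample.extend(rng.sample(members, k))
    return sample

# Eight sports clips would dominate an unbalanced mix; capping evens it out.
clips = [("c%d" % i, "sports") for i in range(8)] + [
    ("n1", "nature"), ("n2", "nature"), ("h1", "human_behaviour")]
balanced = balance_by_category(clips, key=lambda c: c[1], per_category=2)
counts = Counter(cat for _, cat in balanced)
print(dict(counts))  # {'human_behaviour': 1, 'nature': 2, 'sports': 2}
```

Capping rather than oversampling avoids duplicating scarce clips; the trade-off is that under-represented categories remain smaller unless more verified material is sourced for them.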

A further advantage for content owners is that their data stays in place when they use the Versos AI platform, while buyers can preview transcripts, context tags, verified licensing and format details before purchasing data.

From Library to Dataset – Versos AI Platform

Versos AI’s Video Library Intelligence Platform allows video library owners to analyse massive video archives, understand what content they have, determine eligibility for AI use, and assemble datasets based on precise technical and categorical criteria. Built on top of this intelligence layer, the Versos AI Video Training Data Marketplace serves as a controlled licensing network that enables datasets to be evaluated, licensed, delivered and tracked across AI developers, aggregators and content owners.

Archives are automatically mapped and organised by category, format, tags, rights status and other attributes, and each asset is linked to verified ownership and usage rights. This allows AI teams to discover and license the data.
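Linking each asset to verified ownership and usage rights is what allows a platform to decide whether it is eligible for AI use at all. The sketch below encodes one plausible rule under stated assumptions: the `Asset` fields, the `RightsStatus` values, the `"ai_training"` use label and the `eligible_for_ai_training` check are all hypothetical, not Versos AI’s schema.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional

class RightsStatus(Enum):
    CLEARED = "cleared"
    RESTRICTED = "restricted"
    UNKNOWN = "unknown"

@dataclass(frozen=True)
class Asset:
    asset_id: str
    owner: Optional[str]             # verified rights holder; None if unverified
    status: RightsStatus
    permitted_uses: frozenset[str]   # e.g. {"broadcast", "ai_training"}
    rights_expire: Optional[date] = None  # None means no expiry recorded

def eligible_for_ai_training(asset: Asset, on: date) -> bool:
    """True only if ownership is verified, rights are cleared, AI training is
    an explicitly permitted use, and the rights have not expired on `on`."""
    if asset.owner is None or asset.status is not RightsStatus.CLEARED:
        return False
    if "ai_training" not in asset.permitted_uses:
        return False
    return asset.rights_expire is None or on <= asset.rights_expire

archive = [
    Asset("a1", "Studio X", RightsStatus.CLEARED, frozenset({"ai_training"}), date(2030, 1, 1)),
    Asset("a2", None, RightsStatus.CLEARED, frozenset({"ai_training"})),
    Asset("a3", "Studio X", RightsStatus.CLEARED, frozenset({"broadcast"})),
    Asset("a4", "Studio Y", RightsStatus.CLEARED, frozenset({"ai_training"}), date(2020, 1, 1)),
]
print([a.asset_id for a in archive if eligible_for_ai_training(a, date(2025, 6, 1))])  # ['a1']
```

The check is deliberately conservative: anything unverified, uncleared or not explicitly licensed for training is excluded rather than assumed to be available.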

On the owners’ side, users can see every file, format, category and dataset deal in one internal dashboard without manual tagging. Footage across an entire archive can be discovered by category, type, format or keyword, making it possible to reuse, repurpose or reveal content at the right moment. Real-time tracking of assets and licensed content maintains operational visibility from ingest through to licensing. www.versos.ai