DNEG VFX Supervisors talk about working as lead vendor on sci-fi thriller ‘Mercy’, visualising an AI justice system and forensics, complex environments and destruction of the very near future.

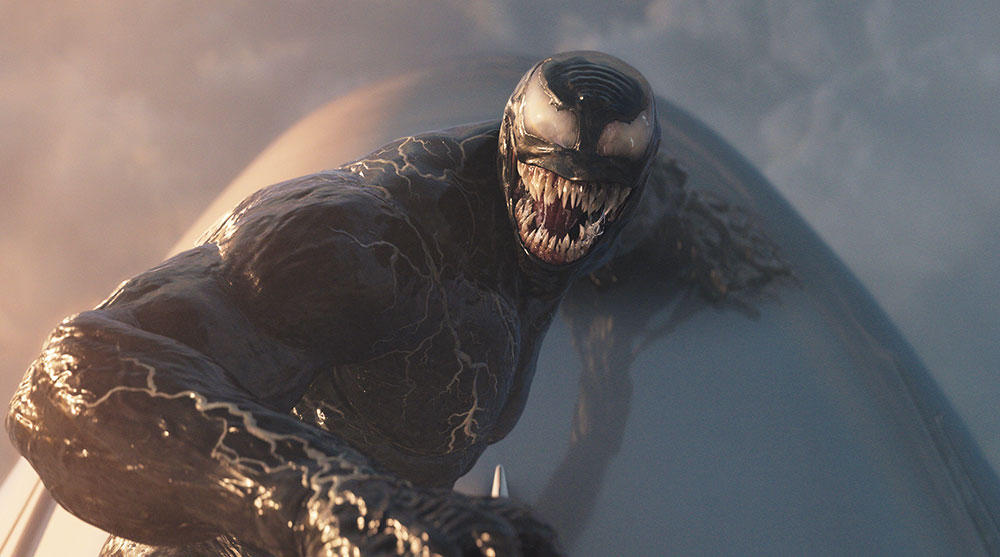

DNEG took the role of lead VFX vendor on the science-fiction thriller Mercy from Amazon, and oversaw the full stereo conversion. A project that needed extensive visual effects across most sequences, Mercy starts with a familiar premise – a hero’s journey set in a dystopian future, overshadowed by an AI-powered justice system. However, this story’s hero is a Police Detective, Chris Raven. Accused of murdering his wife, he is strapped to a chair in a mechanised courtroom to face an AI judge.

In this world, AI judges deliver all available media resources to defendants, plus 90 minutes in which to search, discover and present the evidence needed to prove their innocence – or be executed. While the protagonist works through his investigation, interacting with the outside world only through a complex system of holographic screens, the audience also experiences the story almost entirely from his extreme perspective.

DNEG’s creative approach for the project brought significant challenges. First, their team needed to manage a huge volume of screen content, while maintaining precise continuity across hundreds of shots. They also created the digital courtroom, a wide interior space that includes a server room beneath its glass floor, two adjacent laboratories and a futuristic computer set behind the AI judge.

Large-scale destruction shots across Los Angeles involved CG traffic, crowds, helicopters and police vehicles. Throughout, the DNEG team also carried out the lookdev and build of forensic crime reconstruction sequences, CG drones, facial recognition effects and environmental enhancements. Digital Media World had the chance to talk to VFX Supervisors Chris Keller and Simon Maddison about their work on the project, the production’s expectations and their approaches to the biggest, most interesting challenges.

Courtroom Environment and Drama

Like the story, the work centred on the courtroom, where the production made use of virtual production techniques. Chris Keller said, “Every time we are in the courtroom with the main character, the photography took place in a 360° virtual production volume, with a temporary courtroom environment projected all around him.

“We would generally replace the courtroom in the plates with 3D imagery in wide shots, but many medium and close-up shots could lean heavily on the plate photography with minimal cleanup. As well as the courtroom images, production also temped in animated screens that helped with eyelines and provided excellent interactive lighting.”

Because they had that temporary environment – which had been created in pre-production in Unreal Engine and was present inside the volume throughout the shoot – the broad design language of the courtroom was fairly well established in advance. Rather than a flashy sci‑fi set that steals the audience’s attention, their goal was to make the room feel like a “believable, near-future environment you could live in for most of the film,” said Chris.

Look and Mood

After principal photography, when they ingested the Unreal asset, then up-resed and refined it, these fundamentals stayed consistent. But they found there was definitely room to iterate on the design. “We spent a lot of time researching real-life data centres and quantum computers, which factored heavily into the final look,” he said. “We aimed for a dark, modern space with recognisable courtroom elements and enough tradition in the layout to make it feel legible, but allowed the technology to run the room.

“On top of that, the environment had to support two competing needs – keeping it dark enough to let the screen content read well, while allowing the two actors to stand out as hero subjects. A lot of the look and mood came from motivated, story-driven sources, like the glow from the data centre below the glass floor, the labs to either side, and lighting elements around the quantum computer area behind Maddox, the AI Judge.”

The large number of light sources in the room – the quantum computer behind Maddox, the ceiling lights, the data centre below the floor, the labs and, of course, the screens themselves – called for a special approach. Each of these elements was grouped into light IDs, and wider shots needed a lookdev and rebalancing stage in comp to get a good read of the room while still keeping the moody, underlit look.

Municipal Cloud and Holographic Screens

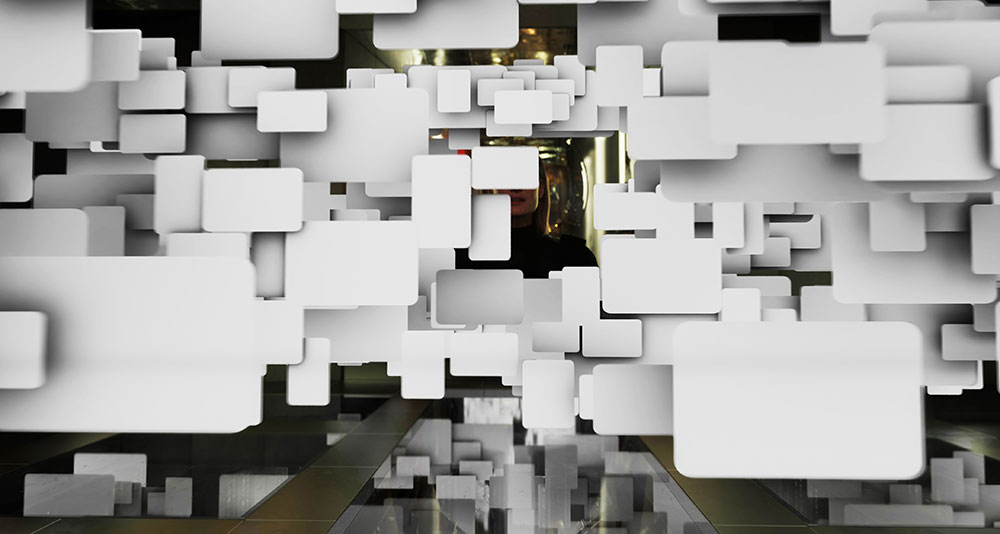

The visualisation of the municipal cloud was among DNEG’s most interesting challenges. More of an event than an object, it occurs at the moment when the entire database of video clips, recordings and other pieces of media loads around Raven for the first time, surrounding him with hundreds of screens. The result is both fascinating and threatening.

“In the brief, when the cloud first appears, it has to overwhelm not just the character, but the audience – but it couldn’t feel random,” Chris noted. “We only had a couple of shots to convey to the audience that the Mercy courtroom had access to a gigantic database of any footage and documents that exist in the LA municipality.”

This meant that a lot of information had to be on screen at once, which could easily have looked chaotic. Needing to give it structure, the team developed an internal sorting logic of hierarchical clusters where the first layer is location footage (CCTV, phone, bodycam), which can recursively spawn deeper layers of related detail (reports, records, documents), creating a kind of fractal organisation.
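As a rough illustration of that sorting logic, the sketch below builds a small tree in which a piece of location footage spawns related reports, which in turn spawn records and documents. It is not DNEG's tool: the `Cluster` class, the `RELATED` lookup and every clip name are hypothetical, invented only to show the recursive idea.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Cluster:
    """One screen in the cloud plus the deeper detail it spawns."""
    name: str
    kind: str  # e.g. "location", "report", "record", "document"
    children: List["Cluster"] = field(default_factory=list)


def build_cluster(name: str, kind: str,
                  related: Dict[str, List[Tuple[str, str]]]) -> Cluster:
    """Recursively attach every item related to `name` as a child cluster."""
    node = Cluster(name, kind)
    for child_name, child_kind in related.get(name, []):
        node.children.append(build_cluster(child_name, child_kind, related))
    return node


def flatten(node: Cluster, depth: int = 0):
    """Walk the tree depth-first, yielding (layer depth, screen name)."""
    yield depth, node.name
    for child in node.children:
        yield from flatten(child, depth + 1)


# Hypothetical lookup: which media items relate to which.
RELATED = {
    "cctv_cam_01": [("incident_report", "report")],
    "incident_report": [("witness_record", "record"),
                        ("permit_scan", "document")],
}

tree = build_cluster("cctv_cam_01", "location", RELATED)
layout = list(flatten(tree))
```

The depth value is what a layout step could use to decide how far from the location footage each deeper layer sits, so related material stays visually grouped.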

Chris said, “The amount of content we were working with was massive. The wide shot of the municipal cloud shows over 1,000 different images and videos at once. As well as the layer of location footage, we had headshots, documents, plates from the shoot and more.

“Our Production VFX Supervisor Axel Bonami and his team worked continuously to gather hundreds of pieces of footage. Some came from the main shoot, some were supplied by the art department, some were sent in by friends and family, some were stock footage ... and it all had to be cleared legally.”

Ingest Unit

The organisational challenge was enough to warrant setting up a dedicated ingest unit to import and manage the material.

In terms of on-screen arrangement, the hierarchical set of rules mentioned above drove the layout. All of these techniques together prevented the cloud from feeling like random tiles in 3D space.

Creating, animating and managing the screens took a good mix of 2D and 3D processes. “Outside of the massive municipal cloud beat, which was achieved in 3D, our screens were accomplished using an advanced Nuke setup,” Chris said. “Mercy was the first DNEG show to utilise Nuke 14 because of its USD workflow and the ability to easily manipulate geometry even after the initial 3D layout had been finished. It meant we could save final layout and animation tweaks for compositing.

“Regarding the screen look, we developed a complex UI setup that included a frosted glass frame, bevels, reflections and diffusion from the footage onto the glass screen. But, although the vast majority of our screens were achieved in Nuke, it became clear very quickly that the municipal cloud moment needed a full 3D solution because of the sheer number of elements that all had to interact with each other and the room.

“So we had to take our 2D lookdev and replicate it in RenderMan. This included writing shaders for the frosted glass frames, diffusion and reflections. Again, we had to split everything out into IDs to give the compositors control over the final look to make sure things weren’t getting too busy.

“Further to that, whenever we were dealing with more than 10 screens at once, we built the cloud as a procedural 3D system in Houdini, instancing content onto points from our database so we could iterate quickly at scale. Art direction was baked into the setup, meaning we could remove duplicates, replace footage, speed up or slow down certain screens, and place hero clusters for the foreground.”
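The idea of instancing database content onto points, with art direction layered on top, can be sketched in plain Python. This is a simplified stand-in, not the Houdini setup described above: the function `assign_clips`, the `hero` override map and the clip names are all assumptions made for the example.

```python
import random


def assign_clips(point_count, clips, hero=None, seed=0):
    """Assign one clip per point index, without duplicates.

    `hero` maps point index -> clip name and always wins, mimicking
    hand-placed foreground screens. Remaining points draw from the
    de-duplicated pool; points are left empty if the pool runs out.
    """
    hero = hero or {}
    rng = random.Random(seed)  # fixed seed keeps the layout repeatable
    reserved = set(hero.values())
    # dict.fromkeys drops duplicate clips while preserving order
    pool = [c for c in dict.fromkeys(clips) if c not in reserved]
    rng.shuffle(pool)

    assignment = {}
    for index in range(point_count):
        if index in hero:
            assignment[index] = hero[index]
        elif pool:
            assignment[index] = pool.pop()
    return assignment


# Hypothetical database entries, including an accidental duplicate.
CLIPS = ["cctv_a", "cctv_b", "phone_a", "cctv_a", "bodycam_a", "doc_a"]
screens = assign_clips(6, CLIPS, hero={0: "raven_bodycam"})
```

Because the seed is fixed, swapping a single clip in the database changes only the screens that depended on it, which is the kind of fast iteration a procedural setup is meant to allow.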

Automating Continuity

Their finely balanced pipeline with its dedicated ingest unit meant they could import footage or graphics and generate templated Nuke scripts to execute the bulk of the screen work, including lighting/shading treatments, in a consistent way.

Continuity is paramount in Mercy because the fast-paced story is essentially experienced in real time, from Raven’s viewpoint through holographic screens. Therefore, the screen content is important as it constantly informs and alerts the viewers of where they are in the story.

They created around 700 shots featuring screens, with over 3,000 individual elements. The UI had to remain consistent with the narrative chronology and with itself across a huge number of shots. This meant close collaboration with editorial and frequent changes to the courtroom’s guilt meter and timer as the edit evolved, which they had planned for in the setup.

Many of the courtroom shots are very dynamic. Moving elements in the room might include Chris Raven, screen content and the municipal cloud elements – plus camera moves. Much of the choreography was about honouring what was already baked into the performance on the day. “Our approach was to keep building around the timing in the plates as much as we could, even as the final content and layout of our screens evolved in post. In the few cases that we had to shift timing, we applied selective relighting, but the goal was always to design the final VFX in a way that still feels like it’s driving the same performance beats you see in the plates,” said Chris.

“Wide shots in the courtroom would generally start by extracting Raven and the chair from the plate. Occasionally, a blue patch was projected behind him to aid with the extraction. Outside of that blue patch, the rest of the volume would show the projected courtroom environment, which helped greatly when replacing it with the CG one.

“The base of Chris Raven’s chair was replaced to help integrate it with the CG floor, and we also had a digi double in the chair that we used to render reflections in the floor.

“Somewhat differently, the Maddox elements were shot on blue screen, which we keyed, treated in 2D to achieve a ‘virtual human’ look, and placed on a 2.5D card. This received the same frosted glass look as our other screens, and sometimes 2D relighting motivated by story beats.”

Stuck in Traffic – Destruction, Crowds, Vehicles

At a certain point in the story, the POV leaves the courtroom and the action descends into an extended, complex sequence of heavy destruction. VFX Supervisor Simon Maddison said, “This sequence was quite long, playing out across the entire final act of the film. Not only did we need to create CG traffic that was all rigged for destruction, but also an entire city full of homeless camps and crowds of people, some requiring fully CG locations. Even the sky was full of delivery drones, a quadcopter ridden by one of the characters and a Black Hawk helicopter, complete with rappelling SWAT officers.”

Although DNEG has produced lots of memorable high-speed car chase and collision sequences in many of their earlier projects, what made this one different for Simon was that it required so many different approaches to complete. “That’s not even mentioning that we needed to create views of the same action from different perspectives at exactly the same time,” he said. “Each approach needed to work perfectly, not only looking real but also matching its accompanying view, even if one was shot in the volume and the other was shot on location with CG extensions.”

The production was able to shoot very early morning drone shots through the streets of LA with a real truck driving along a pre-described path. Doing this let them build up the sequence around real, recognisable locations on a real journey. The path the truck took was also shot with an array of cameras, from both a tracking vehicle and a drone, producing a full 360° spherical moving environment.

Cameras Everywhere

“So, because we had the arrays, even when we didn’t have footage of the truck, or we needed to change the action, we had a background plate ready to populate with digital assets. We also built some of the locations we needed entirely in CG, like the exterior of the courthouse and the alleyway leading up to that,” Simon noted.

“We had multiple options for putting shots together from different points of view – including the aerial shots. The character Jaq on the quadcopter, for example, was shot in a volume using those stitched background plates. Because the action was pre-described, production knew where she needed to be at which point in the chase, and that would become the projected background.

“Likewise, if we needed to change location for any particular take, it was just a matter of replacing the background, and in some instances the reflections as well, in post.

“Sometimes, if the camera was up with Jaq on the quadcopter, for instance, we could choose the section of the array shot with the drone to match the action happening on the ground in the shot before, after or even at the same time in a different window.”

An especially effective aerial shot of a semi-truck, speeding down a street and colliding with other vehicles as a crowd rushes away, started with a plate shot of a practical truck driving down an early morning street in LA. “No pedestrians and no other vehicles were present except for a couple of police cars,” Simon described. “During the edit, Guy Norris and his visualisation team at Proxi VP did extensive post-vis on the shot, working out the story with the crowd and other vehicles, as well as motion capture for a lot of the performance, especially the more stunt-driven action.

“Once we had the shot in VFX, we tweaked some of that action, built up the layout of the street to get more specific with the festival, and created a crowd system using DNEG’s motion capture stage and tools in Houdini. All of the collisions and pedestrian action were generated in CG around the path of the real truck.”

Getting Airborne

The CG drones emerged from two different design approaches. One was to design what the team called the ‘logistics drones’ that populated the skies in some sequences, acting as airborne traffic. Simon said, “These designs were inspired by some real fixed-wing drones in existence today, and tailored to add a ‘near future’ vibe. We built a library of parts that could be mixed and matched to create an endless supply of variations, and a smart system was built to populate the skies in a logical way.”

In contrast, the police drones were created by production. The advantage for the artists working on this type was that it was created practically and rigged to a gimbal, allowing for some real motion in the shots. For authenticity, they studied real-world examples and made sure their animation conformed to what those aircraft can actually do.

In both cases, video reference of real vehicles was used extensively. The quadcopter was a distinctive example – large, heavy and composed of many small working parts with nimble, responsive motion. Simon said, “I remember one video in particular that formed the basis of how our quadcopter manoeuvres. We studied every frame of that video – how it moves forwards, backwards, and how it turns was all based on something that actually exists. In other words, our aircraft designs may have been ‘future-fied’, but what they can do was not.”

For DNEG’s team, this was one of the best aspects of Mercy and the director's vision for it. “The film conveys the ‘near future’, not a distant future. Everything we created was based on something that does exist today – if not yet in wide circulation – which meant you won’t see anything that we didn’t have a good, solid reference for,” said Simon.

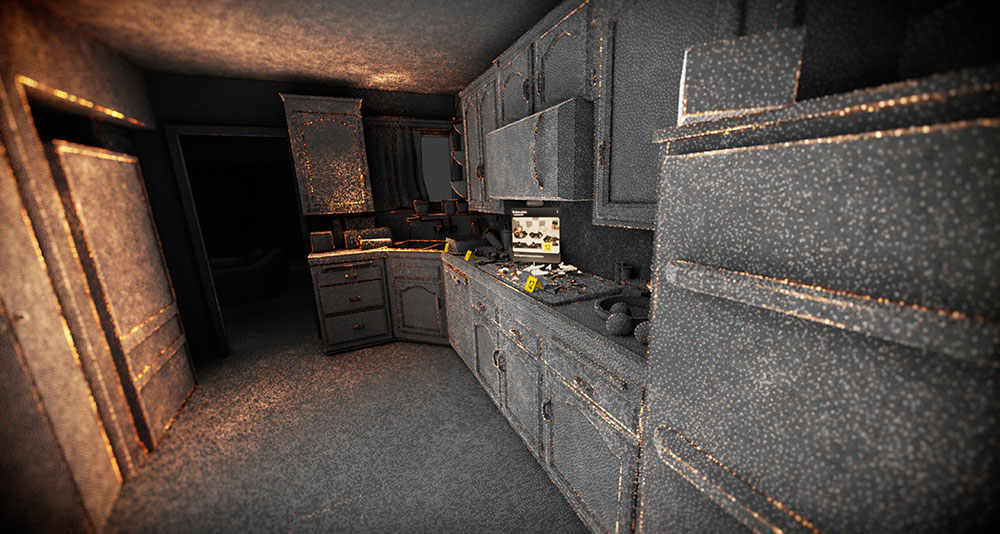

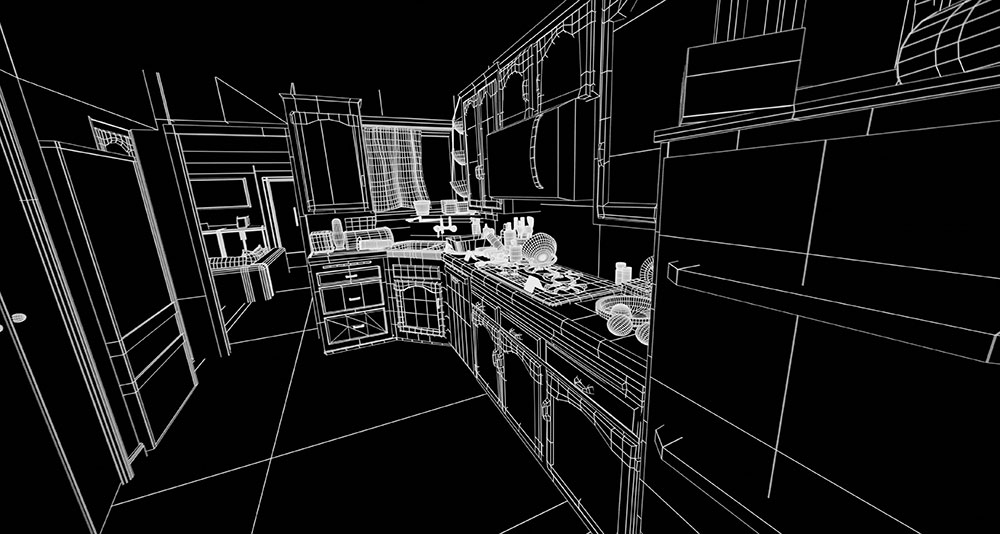

Forensic Crime Reconstructions

Among the tools available to the police department in this story are forensic crime reconstructions, which DNEG designed and built. Much like the courtroom, the lookdev goal for these shots was ‘grounded first, futuristic polish second’. “We studied real forensic tools and workflows, which use a combination of LiDAR and hand-modelling,” Chris said. “We tested both early on, but realised that these techniques would be too hard to art-direct cleanly, which was going to be essential. Real scanning data is inherently messy, and readability is of utmost importance in forensic reconstructions.

“What we ended up with was a cleaner point-cloud methodology. It took more manual work but was more controllable and art directable.

“So, what you’re seeing is a carefully controlled point cloud that is instanced to proxy geometry of the set. We provided depth and point passes to comp, which were used to highlight certain areas or achieve the X-ray scanning effect via 3D masks and grades.”

The build approach started from clean proxy geometry of the house as the scene of the crime, followed by generating point cloud representations at different densities (light/medium/dense), which let them choose what reads best per story beat. To keep the image legible, the point cloud was supported with additional render passes – occlusion, lighting and edges. Occlusion was especially important to prevent having to read multiple depth layers at once.
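To make the density idea concrete, here is a minimal sketch that scatters points over a single proxy triangle at three densities. The density values, the `WALL` triangle and the function names are assumptions chosen for illustration; the article does not say how the production generated its points.

```python
import random


def triangle_area(a, b, c):
    """Area of a 3D triangle from the cross product of two edges."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    cx = ab[1] * ac[2] - ab[2] * ac[1]
    cy = ab[2] * ac[0] - ab[0] * ac[2]
    cz = ab[0] * ac[1] - ab[1] * ac[0]
    return 0.5 * (cx * cx + cy * cy + cz * cz) ** 0.5


def scatter_on_triangle(a, b, c, density, rng):
    """Scatter roughly `density` points per unit area, uniformly."""
    count = round(triangle_area(a, b, c) * density)
    points = []
    for _ in range(count):
        u, v = rng.random(), rng.random()
        if u + v > 1.0:  # fold samples back inside the triangle
            u, v = 1.0 - u, 1.0 - v
        points.append(tuple(a[i] + u * (b[i] - a[i]) + v * (c[i] - a[i])
                            for i in range(3)))
    return points


# Assumed densities (points per square unit) for the three variants.
DENSITIES = {"light": 10, "medium": 50, "dense": 200}
# Hypothetical piece of proxy geometry lying flat in the z = 0 plane.
WALL = ((0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 2.0, 0.0))

rng = random.Random(7)
clouds = {name: scatter_on_triangle(*WALL, density, rng)
          for name, density in DENSITIES.items()}
```

Generating all three variants from the same clean geometry means a shot can switch density without any change to the underlying layout.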

Much of the final look was then shaped in compositing, using depth/position data for depth of field. Even the scanning effects were animated in the shape of radial pulses in compositing.
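A radial pulse of this kind can be expressed as a mask driven by each pixel's world position. The sketch below is one possible formulation, assuming a Gaussian falloff around an expanding ring; the function name, the `speed` and `width` parameters and the falloff shape are illustrative choices, not details from the production.

```python
import math


def pulse_mask(position, origin, time, speed=1.0, width=0.25):
    """Return a 0..1 mask that peaks where the expanding ring currently is.

    `position` is a point from the position pass, `origin` is where the
    scan starts, and the ring radius grows linearly with time.
    """
    distance = math.dist(position, origin)
    radius = speed * time
    return math.exp(-((distance - radius) / width) ** 2)


ORIGIN = (0.0, 0.0, 0.0)
on_ring = pulse_mask((2.0, 0.0, 0.0), ORIGIN, time=2.0)
far_away = pulse_mask((5.0, 0.0, 0.0), ORIGIN, time=2.0)
```

Multiplying a grade by this mask brightens only the points the ring is passing through, and animating `time` sweeps the pulse outward through the scene.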

Shot on iPhone

Interestingly, these shots didn’t start with the live action captured on set, but with footage shot vertically on a real iPhone as part of the narrative. During the transition to forensics, the artists would dynamically widen the aspect ratio as if drawing curtains apart, and fade to the 3D reconstruction.

Chris commented, “That transition was often quite tricky because the wide-angle lens on the iPhone would solve as a focal length of 7mm on our movie filmback, which created too much of a fisheye look. Extensive scans and reference photography of the set allowed us to reconstruct the entire house practically, as a textured 3D location, so we could move the camera freely once we were in 3D.”
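The fisheye feel of a 7mm solve follows from the standard relationship between focal length, filmback width and field of view. The sketch below applies that formula; the filmback width is an assumption (a Super 35-style 24.89mm), since the article does not state the production's actual filmback.

```python
import math


def horizontal_fov_degrees(filmback_width_mm, focal_length_mm):
    """Horizontal field of view for a rectilinear lens, in degrees."""
    return math.degrees(
        2.0 * math.atan(filmback_width_mm / (2.0 * focal_length_mm)))


# Assumed filmback width; the real value for this show is not given.
ASSUMED_FILMBACK_WIDTH_MM = 24.89

iphone_solve_fov = horizontal_fov_degrees(ASSUMED_FILMBACK_WIDTH_MM, 7.0)
reference_fov = horizontal_fov_degrees(36.0, 18.0)  # exactly 90 degrees
```

Under that assumption the 7mm solve comes out at around 120 degrees horizontally, far wider than typical cinema lenses, which is consistent with the distortion Chris describes.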

The facial recognition effects are also rooted in current-day software before adding some sci-fi creativity to achieve the final look. “We based the points and mesh overlay on the output you get from existing facial tracking algorithms,” he said. “To achieve very precise alignment and animation with the faces and eyes, we created the tracking mesh geometry on a digital face in 3D. Then we tracked that mesh onto the character’s face using KeenTools in Nuke. Lastly, we animated the individual points and lines, progressively revealing sections to simulate the mesh being built vertically as the face is scanned.”

dneg.com

Words: Adriene Hurst, Editor

Images Courtesy of DNEG © 2026 Amazon, Inc